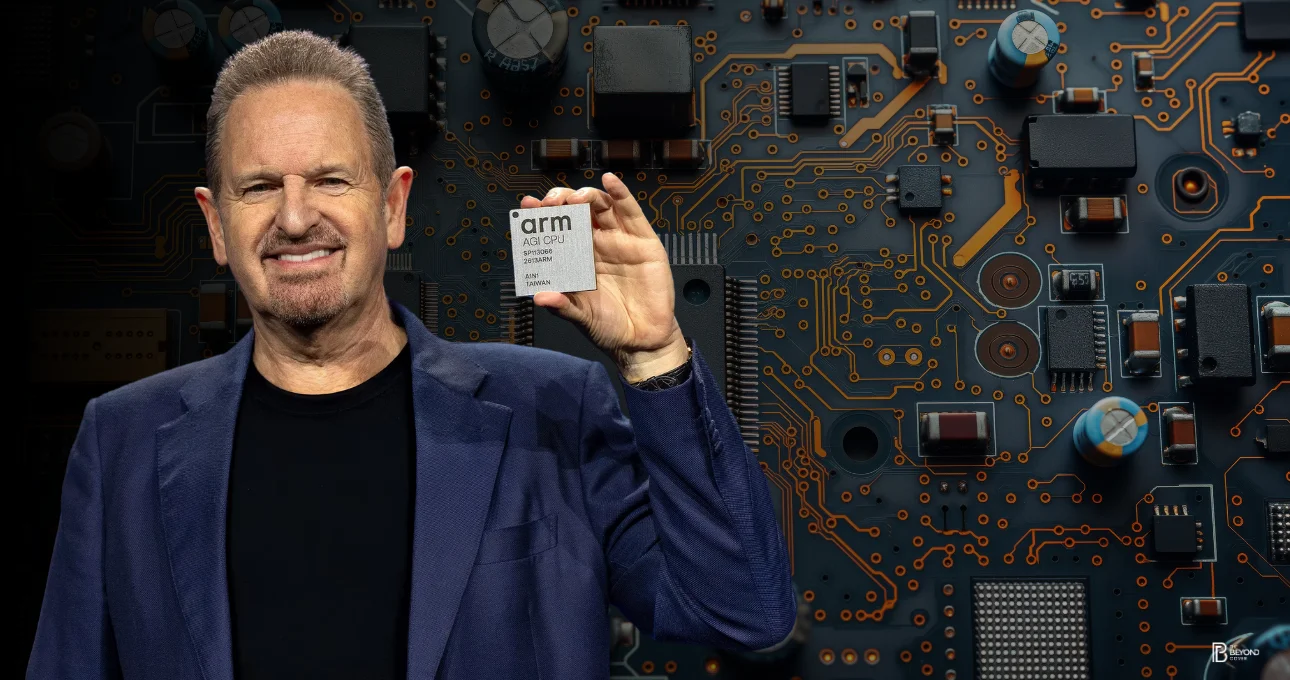

In a move that could reshape AI infrastructure, Arm has unveiled its first production silicon, the AGI CPU, marking a major departure from licensing chip designs alone to selling complete processors. The launch puts Arm directly into the competitive AI data center market, targeting the next wave of agentic AI workloads with a claim of more than twice the rack-level performance of conventional x86 systems, alongside substantial gains in power efficiency.

A 35-Year Model Shifted: Arm Moves to Selling Silicon

For more than thirty years, Arm has powered the semiconductor industry by licensing its architecture to prominent firms such as Apple and NVIDIA. With the AGI CPU, Arm is changing its own business model.

Announced on March 23, 2026, this new chip is manufactured by TSMC using cutting-edge 3nm fabrication techniques, demonstrating Arm’s intent to directly contend with established silicon makers by providing finished hardware solutions.

The AGI CPU is engineered specifically for hyperscale AI environments, where it acts as the primary controller: managing specialized accelerators, optimizing data flow, and coordinating AI agents across large compute clusters.

Tailored for Agentic AI: Performance Meets Energy Savings

Central to Arm’s effort is its focus on agentic AI, an emerging approach in which systems plan, reason, and act autonomously. These workloads demand continuous parallel computation across many cores without latency bottlenecks.

The AGI CPU addresses this demand with:

- Neoverse V3 cores (Armv9.2 architecture)

- Support for bfloat16 and INT8 AI operations

- 6GB/s memory bandwidth per core

- A design prioritizing low latency and high data movement
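For readers unfamiliar with the two number formats listed above, the following minimal Python sketch shows what they represent. It is illustrative only and says nothing about how the AGI CPU implements them: the function names are hypothetical, the bfloat16 conversion uses plain truncation (hardware commonly rounds to nearest even instead), and the INT8 step assumes symmetric quantization with a caller-chosen scale.

```python
import struct


def to_bfloat16_bits(x: float) -> int:
    """Return a 16-bit bfloat16 pattern for x.

    bfloat16 keeps the sign bit, the 8 exponent bits and the top 7 mantissa
    bits of an IEEE 754 float32, so truncation is just a 16-bit right shift.
    """
    (bits32,) = struct.unpack(">I", struct.pack(">f", x))
    return bits32 >> 16


def from_bfloat16_bits(bits16: int) -> float:
    """Expand a 16-bit bfloat16 pattern back to a Python float."""
    (value,) = struct.unpack(">f", struct.pack(">I", bits16 << 16))
    return value


def quantize_int8(values, scale):
    """Symmetric INT8 quantization: round(v / scale), clamped to [-127, 127]."""
    return [max(-127, min(127, round(v / scale))) for v in values]


if __name__ == "__main__":
    pi_bits = to_bfloat16_bits(3.14159265)
    print(hex(pi_bits), from_bfloat16_bits(pi_bits))
    print(quantize_int8([1.0, -2.0, 100.0], scale=0.5))
```

Both formats trade precision for throughput: bfloat16 keeps the full float32 exponent range with fewer mantissa bits, while INT8 maps values onto 8-bit integers through a scale factor.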

Unlike older x86 designs, the AGI CPU strips away unnecessary overhead, allowing for predictable scaling and efficient operation under constant load.

Vast Scale: Denser Racks, Greater Output

Arm’s AGI CPU is designed for maximum component density within AI data centers:

- Air-cooled 36kW racks: Accommodating up to 8,160 cores (30 modules, twin-chip configuration)

- Liquid-cooled 200kW racks: Exceeding 45,000 cores, supported by hardware from Supermicro

This density lets organizations fit more processing power into a smaller physical footprint and markedly improves performance per watt, a crucial factor as data centers worldwide face growing energy constraints.
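The air-cooled figure can be checked with simple arithmetic, using the 136-core count and 300W TDP quoted for the top-spec variant later in this article. The sketch below is a back-of-the-envelope check, not an Arm-published calculation. It assumes every socket in the rack carries the top-spec part, and all names are illustrative.

```python
# Figures quoted in the article.
CORES_PER_CHIP = 136        # top-spec variant
TDP_WATTS_PER_CHIP = 300    # top-spec variant
AIR_MODULES = 30
CHIPS_PER_MODULE = 2        # "twin-chip configuration"
AIR_RACK_WATTS = 36_000
LIQUID_RACK_WATTS = 200_000
LIQUID_MIN_CORES = 45_000   # the article says "exceeding 45,000", so a lower bound


def rack_cores(modules: int, chips_per_module: int, cores_per_chip: int) -> int:
    """Total CPU cores in a rack."""
    return modules * chips_per_module * cores_per_chip


def cores_per_kw(cores: int, rack_watts: int) -> float:
    """Core density relative to the rack power budget."""
    return cores / (rack_watts / 1000)


air_cores = rack_cores(AIR_MODULES, CHIPS_PER_MODULE, CORES_PER_CHIP)
# Assumption: all 60 chips run at the 300 W TDP of the top-spec part.
air_cpu_watts = AIR_MODULES * CHIPS_PER_MODULE * TDP_WATTS_PER_CHIP
air_density = cores_per_kw(air_cores, AIR_RACK_WATTS)
liquid_density = cores_per_kw(LIQUID_MIN_CORES, LIQUID_RACK_WATTS)

print(f"air-cooled cores: {air_cores}")
print(f"air-cooled CPU TDP: {air_cpu_watts / 1000:.0f} kW of {AIR_RACK_WATTS / 1000:.0f} kW")
print(f"cores per kW: air {air_density:.1f}, liquid at least {liquid_density:.1f}")
```

Under these assumptions, 30 modules of two chips reproduce the 8,160-core figure, the CPUs account for 18 kW of the 36 kW air-cooled budget, and both rack types land at roughly 225 cores per kW of rack power.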

Specifications Indicating Serious Rivalry

The highest-specification AGI CPU variant offers performance suited for enterprise applications:

- 136 Neoverse V3 cores

- 3.2 GHz operational frequency

- 300W Thermal Design Power

- 128MB shared system-level cache (SLC)

- 12-channel DDR5-8800 memory interface

- 96 PCIe Gen6 / CXL 3.0 lanes
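The memory interface in this list can be related to the roughly 6GB/s memory figure quoted earlier, read here as a per-core number. The sketch below computes theoretical peak bandwidth only, and it assumes a 64-bit (8-byte) data path per DDR5 channel, which the article does not state. Sustained bandwidth in practice is lower. All names are illustrative.

```python
CHANNELS = 12
MEGATRANSFERS_PER_SECOND = 8800   # DDR5-8800 means 8800 MT/s per channel
BYTES_PER_TRANSFER = 8            # assumption: 64-bit data path per channel
CORES = 136


def peak_bandwidth_gb_per_s(channels: int, mt_per_s: int, bytes_per_transfer: int) -> float:
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes)."""
    megabytes_per_second = channels * mt_per_s * bytes_per_transfer
    return megabytes_per_second / 1000


total_gb_per_s = peak_bandwidth_gb_per_s(
    CHANNELS, MEGATRANSFERS_PER_SECOND, BYTES_PER_TRANSFER
)
per_core_gb_per_s = total_gb_per_s / CORES

print(f"peak per socket: {total_gb_per_s:.1f} GB/s")
print(f"peak per core:   {per_core_gb_per_s:.1f} GB/s")
```

With these inputs the peak works out to about 844.8 GB/s per socket, or about 6.2 GB/s per core, which is in line with the quoted 6GB/s figure.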

Initial performance tests suggest more than double the rack-level output of leading x86 platforms, with the chip sustaining full performance without thermal throttling while keeping every thread busy under sustained load. Arm projects this could yield savings of up to \$10 billion in capital expenditure for gigawatt-scale deployments.

The chip is already incorporated into standard reference hardware such as the OCP DC-MHS dual-socket server, obtainable through partners including ASRock Rack, Lenovo, and Supermicro.

Key Partnerships Bolstering Acceptance

Arm is not entering this market alone. The AGI CPU was developed jointly with Meta as lead partner, ensuring smooth integration with Meta’s MTIA accelerators to support gigawatt-scale AI rollouts.

A developing network of collaborators includes:

- OpenAI (for orchestration applications)

- Cloudflare

- Cerebras Systems

- F5

- SAP

- SK Telecom

The platform also fits the same kind of Arm-based software ecosystem as AWS Graviton, which should allow swift deployment and interoperability in cloud settings.

Challenging a \$100B+ Market Segment

With this debut, Arm is targeting the \$100 billion-plus AI infrastructure sector, putting direct pressure on established names like Intel and AMD—particularly in areas of efficiency and scalability.

As energy limitations become a defining hurdle for contemporary data centers, Arm CEO Rene Haas is banking on “efficiency on a large scale” as the firm’s primary competitive advantage. The AGI CPU is especially well-suited for AI tasks heavily reliant on inference, where maximizing accelerator utilization is paramount to achieving top performance.

A New Chapter for Arm—and AI Infrastructure Systems

Arm’s pivot from intellectual property licensing to selling its own silicon is more than a new product release; it is a fundamental strategic shift. By controlling both the architecture and the finished hardware, the company is positioning itself at the heart of the AI processing stack.

With subsequent chips already mapped out in the Neoverse development plan, the AGI CPU has the potential to establish a new benchmark for how AI infrastructure is constructed—more streamlined, quicker, and significantly more economical.

The underlying message is unmistakable: Arm is no longer just supporting the ecosystem—it is now striving to direct its evolution.