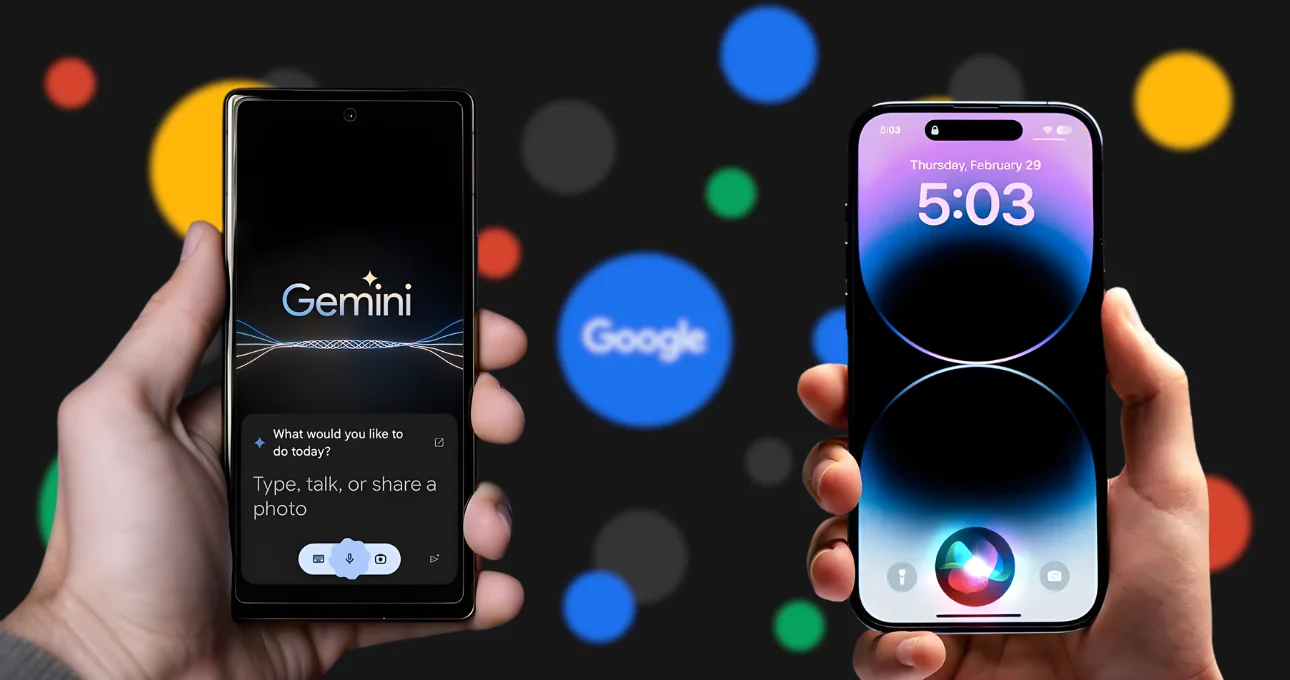

Apple’s AI Wake-Up Call: Siri Gets a Gemini Boost

Apple has officially turned to Google’s Gemini AI to strengthen Siri and power upcoming Apple Intelligence features, a move that signals a decisive shift in the company’s generative AI strategy. After months of speculation and cautious rollouts, the partnership suggests Apple is ready to accelerate its AI ambitions rather than build everything in isolation.

For a company known for vertical integration and in-house development, choosing Gemini is a notable departure. But it also reflects a pragmatic reality: generative AI has entered a phase where speed, scale, and model maturity matter as much as long-term control.

Why Apple Chose Gemini

Apple’s AI approach has historically prioritized privacy, on-device processing, and user trust. While this philosophy has earned loyalty, it has also slowed Apple’s response to the explosive growth of generative AI led by OpenAI, Google, and Anthropic.

Google’s Gemini models—especially Gemini 1.5—offer advanced multimodal reasoning, long-context understanding, and strong performance across text, code, and vision. By integrating Gemini into Siri and Apple Intelligence, Apple gains immediate access to a proven large language model without waiting years for internal alternatives to mature.

Crucially, Apple is expected to maintain its privacy-first architecture. Sensitive requests may still be handled on-device or via Apple’s Private Cloud Compute, while more complex generative tasks can be routed through Gemini under strict data controls.
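
To make the routing idea concrete, here is a minimal sketch of how such a decision could look in code. It is purely illustrative: the `Request` fields, the `Route` names, and the `choose_route` function are hypothetical and do not reflect any published Apple or Google API, and the real criteria for what counts as sensitive or complex have not been disclosed.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    """Hypothetical destinations for an assistant request."""
    ON_DEVICE = "on_device"
    PRIVATE_CLOUD = "private_cloud_compute"
    EXTERNAL_MODEL = "external_model"


@dataclass(frozen=True)
class Request:
    text: str
    contains_personal_data: bool  # assumed flag: request touches sensitive user data
    needs_large_model: bool       # assumed flag: task exceeds on-device capability


def choose_route(request: Request) -> Route:
    """Pick a destination, keeping sensitive requests inside the first-party stack."""
    if request.contains_personal_data:
        # Sensitive requests never leave first-party infrastructure in this sketch.
        if request.needs_large_model:
            return Route.PRIVATE_CLOUD
        return Route.ON_DEVICE
    if request.needs_large_model:
        # Non-sensitive but complex generative work may go to the external model.
        return Route.EXTERNAL_MODEL
    return Route.ON_DEVICE


if __name__ == "__main__":
    examples = [
        Request("Set a timer for ten minutes", False, False),
        Request("Summarize my last five emails", True, True),
        Request("Draft a short poem about autumn", False, True),
    ]
    for req in examples:
        print(f"{req.text!r} -> {choose_route(req).value}")
```

The design choice worth noting is the order of the checks: sensitivity is evaluated before complexity, so a request flagged as personal can never reach the external model regardless of how demanding it is.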

What This Means for Siri

Siri has long been criticized for lagging behind conversational AI assistants. With Gemini under the hood, Siri could finally evolve from a command-based assistant into a context-aware, conversational AI capable of:

- Understanding multi-step requests

- Summarizing messages, emails, and documents

- Generating content across apps

- Offering smarter, more natural responses

This upgrade aligns with Apple’s broader vision for Apple Intelligence, which aims to embed AI deeply across iOS, macOS, and iPadOS rather than positioning it as a standalone chatbot.

A New Phase of Apple Intelligence

Apple Intelligence is expected to focus on assistive AI, not replacement AI. Instead of asking users to adapt to AI tools, Apple wants AI to work quietly in the background—enhancing writing, productivity, search, and personalization across its ecosystem.

Gemini’s integration could power features such as intelligent notifications, contextual suggestions, enhanced Spotlight search, and cross-app automation. For developers, this could unlock new APIs that make AI-native app experiences possible without compromising Apple’s ecosystem rules.

Competition, Not Surrender

Importantly, this partnership does not mean Apple is abandoning its own AI research. Rather, it mirrors Apple’s past strategy with services like Maps and search—partner first, optimize later. Apple continues to invest heavily in proprietary models, and future versions of Apple Intelligence may rely more on in-house AI once performance and efficiency meet its standards.

The move also positions Apple more competitively against Microsoft, which has aggressively embedded OpenAI’s models across Windows, Office, and Copilot.

The Bigger Picture

Apple choosing Google Gemini underscores a larger trend: the AI race is shifting from model bragging rights to real-world integration. Users don’t care who built the model—they care whether it works, respects privacy, and fits seamlessly into their daily workflows.

By combining Apple’s ecosystem dominance with Google’s generative AI expertise, this partnership could redefine how billions of users experience AI—quietly, securely, and everywhere.

For Apple, this isn’t playing catch-up. It’s a strategic reset.