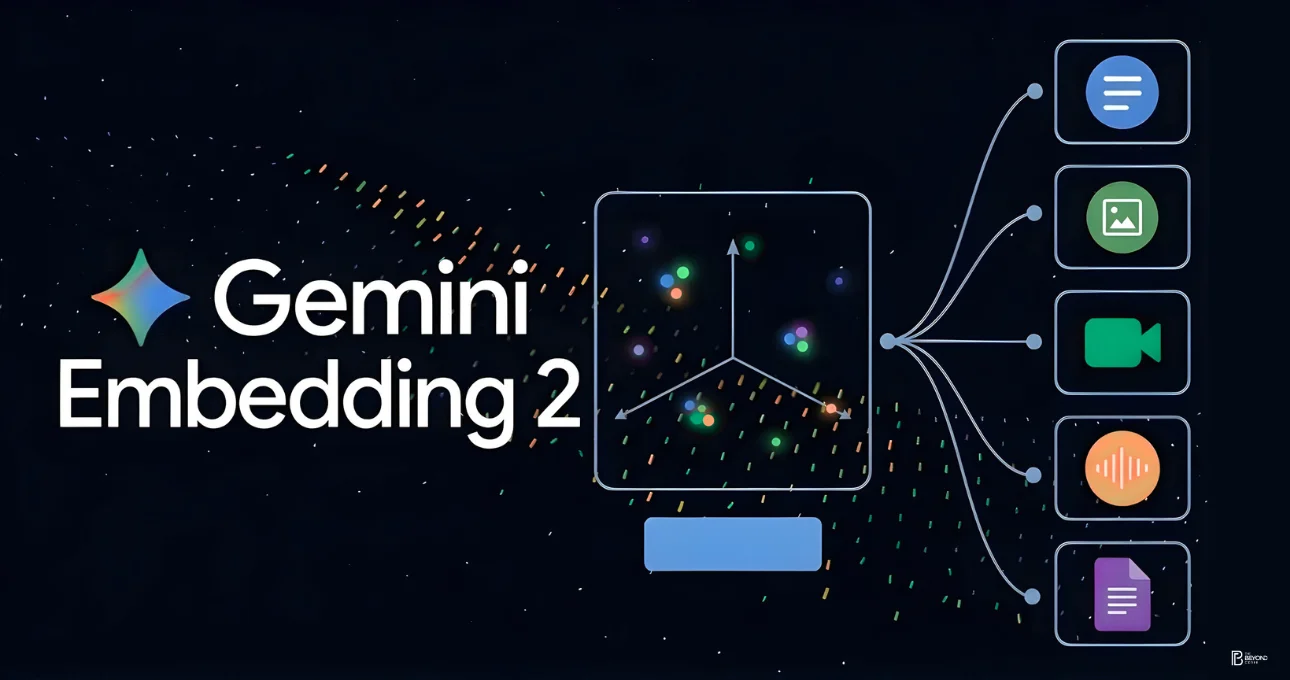

Google’s release of Gemini Embedding 2 marks a significant step forward for enterprise AI. The model is designed to make it easier for businesses to query and manage information spread across different types of data. Unveiled in Mountain View on March 18, 2026, it is Google’s first natively multimodal embedding model: it maps text, images, video, audio, and documents into a single shared vector space. It is expected to substantially improve enterprise search, Retrieval-Augmented Generation (RAG), and semantic understanding across more than 100 languages.

The model became available earlier, on March 10, through the Gemini API and Vertex AI under the identifier `gemini-embedding-2-preview`, and it removes the need for a separate processing pipeline for each data type. Previously, companies needed separate encoders for text, images, and video; Gemini Embedding 2 can embed multiple inputs in a single API request. Companies can now, for example, analyze product listings that combine specifications, photos, and technical write-ups, or customer-service records that combine recorded calls, screenshots, and message threads. Logan Kilpatrick of Google DeepMind described this as a “major shift” in how complex real-world datasets are handled.

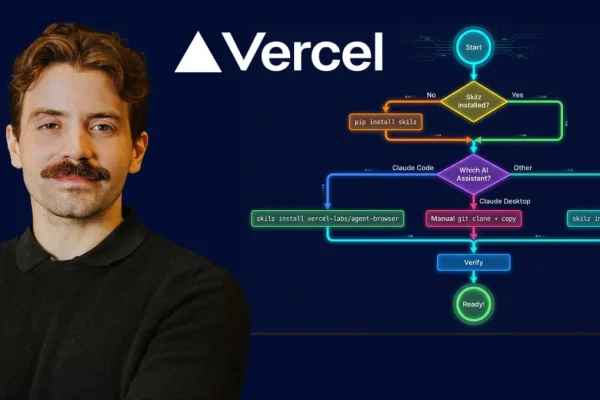

The release comes as agentic AI advances rapidly. Vercel’s Rust-based agent-browser, Sarvam AI’s broad range of voice deployments, and Google’s $32 billion acquisition of Wiz all point to the importance of strong AI foundations. In this context, Gemini Embedding 2 serves as a foundational layer that lets intelligent systems understand and retrieve information across modalities.

At its core, the model introduces a unified multimodal architecture. Older RAG setups struggled because each data type required its own processing pipeline. Gemini Embedding 2 replaces this fragmented approach with a single 768-dimensional embedding space. Per request, it accepts up to 8,192 text tokens, up to six images, video clips of up to 120 seconds, audio directly without prior transcription, and PDF files of up to six pages. It can also embed interleaved inputs, such as a safety video clip paired with an illustration from a manual, which improves retrieval accuracy by 15–25% over previous generations.
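Because these per-request limits are concrete, a client can check its inputs before sending them. The following is a minimal Python sketch, assuming the limits stated in this article (8,192 tokens, six images, 120 seconds of video, six PDF pages); `EmbeddingRequest` and `check_limits` are hypothetical helpers, not part of any Google SDK, and the token count is assumed to come from your own estimate. Audio is not checked because no audio limit is given here.

```python
from dataclasses import dataclass

# Per-request limits as reported in this article (assumed, not verified
# against the official API reference).
MAX_TEXT_TOKENS = 8_192
MAX_IMAGES = 6
MAX_VIDEO_SECONDS = 120
MAX_PDF_PAGES = 6


@dataclass
class EmbeddingRequest:
    """Hypothetical summary of what one multimodal request would contain."""
    text_tokens: int = 0        # estimated token count of the text part
    image_count: int = 0
    video_seconds: float = 0.0
    pdf_pages: int = 0


def check_limits(req: EmbeddingRequest) -> list[str]:
    """Return the limits a request would exceed (an empty list means OK)."""
    problems = []
    if req.text_tokens > MAX_TEXT_TOKENS:
        problems.append(f"text: {req.text_tokens} tokens > {MAX_TEXT_TOKENS}")
    if req.image_count > MAX_IMAGES:
        problems.append(f"images: {req.image_count} > {MAX_IMAGES}")
    if req.video_seconds > MAX_VIDEO_SECONDS:
        problems.append(f"video: {req.video_seconds}s > {MAX_VIDEO_SECONDS}s")
    if req.pdf_pages > MAX_PDF_PAGES:
        problems.append(f"pdf: {req.pdf_pages} pages > {MAX_PDF_PAGES}")
    return problems


# A 45-second safety clip plus one manual illustration fits;
# eight images and a ten-page PDF do not.
print(check_limits(EmbeddingRequest(text_tokens=300, image_count=1, video_seconds=45)))
# → []
print(check_limits(EmbeddingRequest(image_count=8, pdf_pages=10)))
# → ['images: 8 > 6', 'pdf: 10 pages > 6']
```

Rejecting oversized inputs client-side avoids a failed round trip; splitting a long video or PDF into compliant chunks would be the natural next step.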

For businesses, this unlocks new capabilities. A customer-service agent can search for “leaky faucet warranty” and immediately retrieve related videos, policy documents, and call transcripts. E-commerce sites can match a visual query, such as a photo of a red dress, against the products they have in stock. In legal and regulatory work, a single search tool can cover contracts, organizational charts, and compliance guides at once. Integration with Google’s broader cloud infrastructure also makes multi-cloud deployments more secure and scalable.
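To illustrate what a single shared vector space means for search, here is a toy Python sketch: videos, documents, audio, and images sit in one index and are ranked against a query by cosine similarity, with no per-modality pipeline and an optional modality filter. The four-dimensional vectors, file names, and the `search` helper are made up for illustration; real Gemini Embedding 2 vectors would come from the API and have far more dimensions.

```python
import math

# Toy 4-dimensional vectors stand in for real 768-dimensional embeddings.
# All items and numbers are hypothetical.
INDEX = [
    {"id": "faucet-repair.mp4", "modality": "video", "vec": [0.9, 0.1, 0.0, 0.1]},
    {"id": "warranty-policy.pdf", "modality": "document", "vec": [0.8, 0.3, 0.1, 0.0]},
    {"id": "call-2291.wav", "modality": "audio", "vec": [0.7, 0.2, 0.0, 0.3]},
    {"id": "red-dress.jpg", "modality": "image", "vec": [0.0, 0.1, 0.9, 0.2]},
]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec, index, k=3, modality=None):
    """Rank items of every modality in one pass; optionally keep only one modality."""
    candidates = [it for it in index if modality is None or it["modality"] == modality]
    scored = [(cosine(query_vec, it["vec"]), it) for it in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(it["id"], it["modality"], round(s, 3)) for s, it in scored[:k]]


query = [0.85, 0.2, 0.05, 0.1]  # hypothetical embedding of "leaky faucet warranty"
for doc_id, modality, score in search(query, INDEX):
    print(doc_id, modality, score)
# ranks the video, the policy PDF, then the call recording; the dress photo is left out
```

The point of the design is that one similarity function serves every modality, so adding a new content type means adding rows to the index rather than building another pipeline.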

The impact is especially significant in India, where multilingual support is essential. Because the model supports more than 100 languages, including Hindi, Tamil, and Telugu, it aligns well with AI initiatives in the region. Businesses in sectors such as agriculture can combine satellite imagery, weather data, and spoken input to produce highly accurate insights. Public-sector platforms can likewise analyze feedback that constituents submit in different formats to improve both decision-making and service delivery.
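When related inputs such as a satellite image, a weather summary, and a voice note are embedded separately, one common way to combine them in a shared space is a weighted average of the normalized vectors. This is a minimal sketch of that client-side fusion technique, swapped in for illustration; it is not necessarily how Gemini Embedding 2 handles interleaved inputs internally, and `fuse`, its weights, and the toy vectors are hypothetical.

```python
import math


def normalize(vec):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else list(vec)


def fuse(vectors, weights=None):
    """Weighted mean of unit-normalized embeddings, re-normalized to unit length."""
    if not vectors:
        raise ValueError("need at least one vector")
    dim = len(vectors[0])
    if any(len(v) != dim for v in vectors):
        raise ValueError("all embeddings must share one dimensionality")
    weights = weights or [1.0] * len(vectors)
    if len(weights) != len(vectors):
        raise ValueError("need exactly one weight per vector")
    acc = [0.0] * dim
    for vec, w in zip(vectors, weights):
        for i, x in enumerate(normalize(vec)):
            acc[i] += w * x
    return normalize(acc)


# Hypothetical embeddings for one field report (toy 3-D vectors).
satellite = [0.9, 0.1, 0.0]
weather = [0.2, 0.8, 0.1]
voice_note = [0.1, 0.3, 0.9]
record = fuse([satellite, weather, voice_note], weights=[0.5, 0.3, 0.2])
print([round(x, 3) for x in record])
```

Normalizing each input first keeps one modality with larger raw magnitudes from dominating the combined record; the weights then express how much each source should count.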

Technically, Gemini Embedding 2 is straightforward to adopt. Developers generate unified embeddings with simple API calls and store them in vector databases such as Pinecone or Weaviate. It also works with popular frameworks such as LangChain and LlamaIndex, which eases integration into existing AI workflows.
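The embed, store, and query workflow can be sketched in Python with a pluggable embedding function. `InMemoryStore` is a hypothetical stand-in for a vector database such as Pinecone or Weaviate (their client APIs are not shown), and `toy_embedder` is a keyword counter that lets the example run offline. `gemini_embedder` shows how a real call might look with the google-genai SDK’s `client.models.embed_content`; it is not executed here, the model name comes from this article, and the 768-dimension setting is an assumption to verify against current documentation.

```python
import math
from typing import Callable, Sequence

# Any function that maps a batch of texts to a batch of vectors.
Embedder = Callable[[Sequence[str]], list]


def gemini_embedder(texts: Sequence[str]) -> list:
    """Sketch of a real embedder via the google-genai SDK (not called below).

    Needs `pip install google-genai` and an API key in the environment.
    The 768 output size follows the article and is an assumption.
    """
    from google import genai
    from google.genai import types

    client = genai.Client()
    result = client.models.embed_content(
        model="gemini-embedding-2-preview",
        contents=list(texts),
        config=types.EmbedContentConfig(output_dimensionality=768),
    )
    return [e.values for e in result.embeddings]


VOCAB = ["faucet", "warranty", "dress", "red", "contract"]


def toy_embedder(texts: Sequence[str]) -> list:
    """Hypothetical offline embedder: counts a few keywords per text."""
    return [[float(t.lower().count(w)) for w in VOCAB] for t in texts]


def _cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryStore:
    """Hypothetical stand-in for a vector database such as Pinecone or Weaviate."""

    def __init__(self, embed: Embedder):
        self.embed = embed
        self.vectors = {}  # document id -> embedding

    def upsert(self, docs: dict) -> None:
        """Embed all documents in one batch and insert or overwrite them by id."""
        ids = list(docs)
        for doc_id, vec in zip(ids, self.embed([docs[i] for i in ids])):
            self.vectors[doc_id] = vec

    def query(self, text: str, k: int = 3) -> list:
        """Return the ids of the k stored documents most similar to the text."""
        q = self.embed([text])[0]
        ranked = sorted(self.vectors, key=lambda i: _cosine(q, self.vectors[i]), reverse=True)
        return ranked[:k]


store = InMemoryStore(toy_embedder)  # swap in gemini_embedder to call the API
store.upsert({
    "policy": "Faucet warranty terms",
    "catalog": "Red dress, size M",
    "legal": "Supplier contract",
})
print(store.query("leaky faucet warranty", k=1))  # → ['policy']
```

Keeping the embedder as a parameter lets the same store code run offline in tests and against the API in production; LangChain and LlamaIndex wrap the same separation in their own embedding abstractions.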

The model challenges competing embedding offerings by providing genuine multimodal functionality rather than text-only methods. It also benefits from the rest of Google’s technology stack, including its cloud platform and ongoing AI research.

The preview phase is expected to run until the third quarter of 2026, with further improvements planned, including better performance and additional input options. Early benchmarks place it among the top models for multilingual retrieval, and businesses report semantic search up to 40% faster than with older systems.

As AI moves toward automated, agent-driven processes, tools like Gemini Embedding 2 are becoming increasingly important. By making data of every type easier to understand, the model is a key part of the future of corporate intelligence.